*NEW* Multi Token Prediction Just Made Local Agents Running in vLLM ~3x Faster

A working, easy to follow guide to Multi Token Prediction (MTP) in vLLM, with real benchmarks on Qwen 3.6, Gemma 4, and DeepSeek V4, for anyone serving LOCAL large language models in 2026

If you are in any community on Twitter or any subreddit talking about building local AI, I can guarantee this letter will be one of the best places you could possibly start. Might even be all you need for MTP…

FOREWORD

For two years, the bottleneck on local large language models has not been quality. It has been throughput.

You can run Qwen 3.6 or Gemma 4 on a single RTX 3090 (although I would say a 3090 is the absolute floor right now for usable Local AI) and get answers that match frontier class output on most everyday tasks. The problem has been waiting forty seconds for the model to finish writing them (aka latency).

That bottleneck quietly broke in the last three weeks. The technique behind it is called Multi-Token Prediction, or MTP, and vLLM is now the production answer for running it at speed…

This is a working guide to what MTP is, why draft acceptance rate is the only number you should care about, what real users are getting on 24GB, 48GB, 80GB, and AMD ROCm hardware, and how to actually serve it from your own machine today using vLLM.

WHAT MULTI-TOKEN PREDICTION ACTUALLY IS

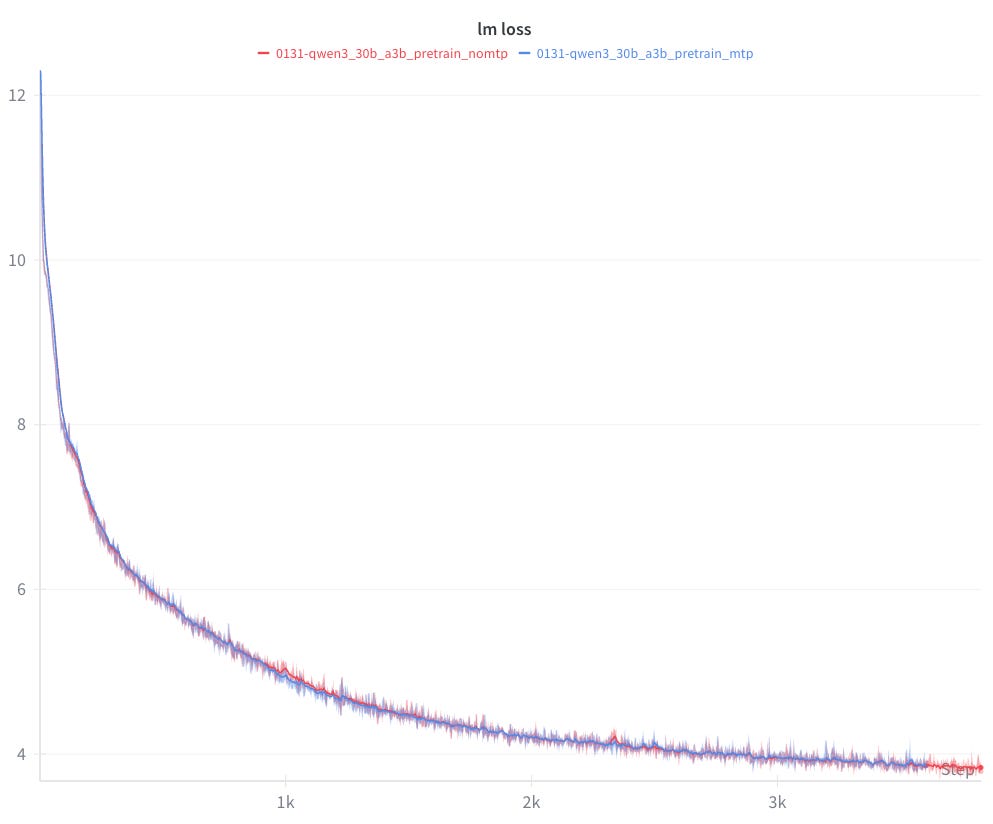

Multi-Token Prediction is not new in the academic sense. DeepSeek introduced it as a training objective in V3, where the model learns to predict not just the next token but the next several tokens at once through additional lightweight prediction heads.

What changed in 2026 is that those heads are now exposed at inference time and wired into vLLM as a first class speculative decoder, configured through the --speculative-config flag.

If you have heard of speculative decoding before, the mental model is similar. A small, fast model drafts a handful of tokens, and the big model verifies them in parallel. When the draft is correct, you get those tokens almost for free.

The crucial difference with MTP is that the “draft model” is not a separate model. It is a set of extra prediction heads that were trained jointly with the main model. They live in the same safetensors weights (Ollama can’t run safetensors directly, to my knowledge), they share representations with the main forward pass, and they understand the main model’s distribution natively.

That is why MTP draft acceptance rates in vLLM are landing in the 70 to 85 percent range, where traditional speculative decoding with an ‘off the shelf’ draft model usually struggles to clear 50 percent.

IS MTP LOSSLESS? (THE QUESTION EVERYONE ASKS FIRST)

Yes. This is the most important property of MTP and the single biggest reason it is taking over.

MTP does not change the output of your model. Every draft token is verified by the full main model before it is committed to the response. If the verification disagrees with the draft, the bad token is rejected and the main model emits the correct one instead.
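The accept-or-reject step described above can be sketched for the greedy case as follows. This is a toy illustration, not vLLM's implementation: the function name and the token lists are made up for the example, and with sampling enabled real engines use a probabilistic acceptance test rather than an exact match.

```python
def verify_draft(draft_tokens, target_tokens):
    """Greedy verification of one speculative step (illustrative only).

    draft_tokens:  k token ids proposed by the draft heads.
    target_tokens: k + 1 token ids chosen by the main model in a single
                   forward pass. target_tokens[i] is the main model's pick
                   given the prompt plus draft_tokens[:i].
    Returns the token ids committed to the response in this step.
    """
    committed = []
    for position, drafted in enumerate(draft_tokens):
        if drafted == target_tokens[position]:
            # The main model agrees, so the draft token is kept.
            committed.append(drafted)
        else:
            # First disagreement: commit the main model's token and
            # discard every draft token after this position.
            committed.append(target_tokens[position])
            return committed
    # Every draft token was accepted. The verification pass also yields
    # one extra token from the main model, so it is committed as well.
    committed.append(target_tokens[len(draft_tokens)])
    return committed
```

Either way, every committed token is one the main model chose itself, which is the reason the output does not change.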

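In other words, MTP is a pure speedup. With greedy decoding, the text you get out of Qwen 3.6 27B with MTP enabled matches the text you would get without it. With sampling, verification is designed so the output follows the same distribution as the main model alone. The one caveat is floating-point noise from different batching and kernel paths, which can occasionally flip a near-tie between two tokens.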

This is fundamentally different from quantization tradeoffs, where you sacrifice a little quality to fit a model on smaller hardware (think AWQ 4-bit). With MTP you sacrifice nothing. You either accept the draft and save a forward pass, or you reject it and pay the original cost. There is no middle ground where quality degrades.

MTP VS TRADITIONAL SPECULATIVE DECODING VS MEDUSA AND EAGLE

Several search queries land here, so it is worth spelling out.

Traditional speculative decoding pairs a big model with a separate, much smaller draft model. For example, you might use Qwen 0.5B to draft tokens for Qwen 32B.

vLLM has supported this for over a year. It works, but draft acceptance is mediocre because the small model does not share the big model’s internal representations, and you pay extra VRAM for the second model.

Medusa attaches multiple prediction heads to a frozen base model after training. It is essentially MTP but bolted on after the fact. vLLM supports Medusa. Acceptance is decent but typically lower than native MTP because the heads never participated in joint training.

EAGLE and EAGLE-2 propagate the base model’s hidden states into the draft head. They perform well, and vLLM ships first class EAGLE support, but they require a separately trained draft head that not every model author publishes.

Native MTP, as shipped in DeepSeek V3, DeepSeek V4, Qwen 3.6, and Gemma 4, is the cleanest version of the idea. The prediction heads are trained from the start as part of the same model. They get the highest acceptance rates, they require no second model, and they cost essentially zero extra memory because they were already in the checkpoint.

vLLM now supports all four paths under one unified speculative decoding interface. Native MTP is the one to reach for when the model author trained it, because the acceptance rate ceiling is highest.

WHY DRAFT ACCEPTANCE RATE IS THE ONLY NUMBER THAT MATTERS

When you read MTP benchmarks, ignore the raw tok/s number for a moment. The number to anchor on is draft acceptance rate.

Draft acceptance rate is the percentage of speculated tokens that the main model agrees with on verification. At 0 percent acceptance, you are actually running slower than baseline because of verification overhead. At 100 percent acceptance, every step would commit all of your draft tokens plus one token from the verification pass itself, so the ceiling is a speedup of roughly draft depth plus one, minus the drafting overhead.

In practice, every accepted token saves you one full forward pass of the main model. So acceptance rate is what converts MTP from a theoretical trick into real wall clock speed.
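To put a rough number on that, here is a back-of-the-envelope model. It assumes each draft token is accepted independently with the same probability, which is a simplification (real acceptance depends on position and context), and the function name is my own rather than anything from vLLM.

```python
def expected_tokens_per_step(acceptance_rate: float, depth: int) -> float:
    """Expected tokens committed per main-model forward pass.

    Simplifying assumption: every draft token is accepted independently
    with probability `acceptance_rate`. The draft token at position i only
    counts if all earlier ones were accepted, which contributes
    acceptance_rate ** i. The i = 0 term is the one token the main model
    always contributes, whether or not any draft is accepted.
    """
    return sum(acceptance_rate ** i for i in range(depth + 1))
```

Under this model, 80 percent acceptance at depth 3 works out to about 2.95 tokens per step, before subtracting the cost of drafting and verifying.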

vLLM exposes acceptance rate in its built in metrics endpoint, which is one of the practical advantages over llama.cpp.
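If you want to compute the rate yourself, the server's /metrics endpoint returns Prometheus-style text that is easy to parse. The sketch below is hedged: the two counter names are assumptions about what your vLLM build exposes (names have changed between versions), so check your own /metrics output and pass the right names in.

```python
def acceptance_rate_from_metrics(
    metrics_text,
    accepted_name="vllm:spec_decode_num_accepted_tokens_total",  # assumed name
    draft_name="vllm:spec_decode_num_draft_tokens_total",        # assumed name
):
    """Compute accepted / drafted from Prometheus exposition text.

    Returns None when no draft tokens have been counted yet.
    """
    accepted = 0.0
    drafted = 0.0
    for line in metrics_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        # Sample lines look like: name{label="value"} 123.0
        name_and_labels, _, value = line.rpartition(" ")
        metric_name = name_and_labels.split("{", 1)[0]
        if metric_name == accepted_name:
            accepted += float(value)
        elif metric_name == draft_name:
            drafted += float(value)
    return accepted / drafted if drafted else None
```

To use it, fetch http://localhost:8000/metrics from a running server (for example with urllib.request.urlopen) and pass the decoded text in.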

Benchmarks in the last week are landing consistently above 80 percent acceptance on coding workloads with Qwen 3.6 served through vLLM, which is why the 2x to 3x speedup numbers are actually reproducible.

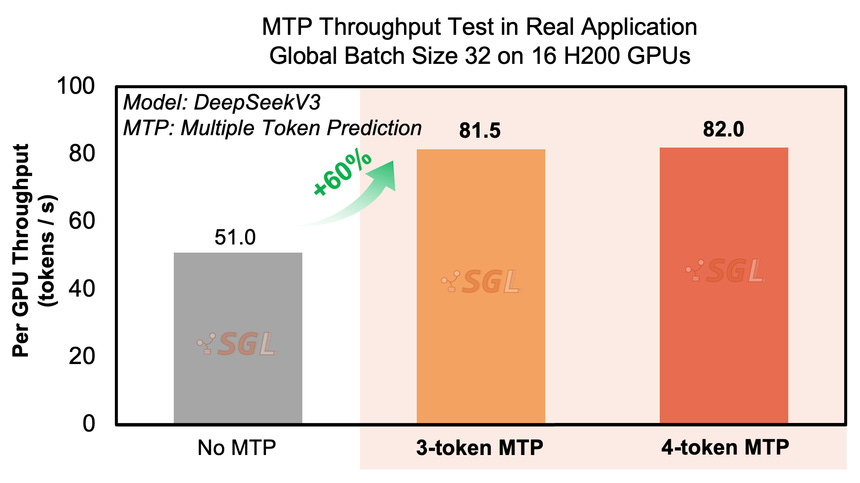

There is also a depth choice. MTP can speculate one token ahead, three tokens ahead, or further. The community has converged on three as the optimal draft depth for current Qwen and Gemma checkpoints. vLLM exposes this directly as num_speculative_tokens. Going deeper keeps adding drafting and verification cost, while each extra position is less likely to be accepted than the one before it, so you get diminishing returns past depth 3.
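The depth tradeoff can be played with in a toy cost model. Everything here is an assumption for illustration: acceptance is treated as independent per token, and draft_cost is a made-up knob for how expensive one extra speculated token is relative to a plain main-model step. It is not a measurement of any real checkpoint, so treat the depths it picks as a shape, not a recommendation.

```python
def modeled_speedup(acceptance_rate, depth, draft_cost):
    """Toy speedup estimate versus plain one-token-per-step decoding.

    draft_cost is hypothetical: the extra cost of drafting and verifying
    one speculated token, as a fraction of a normal main-model step.
    """
    # Expected tokens committed per step under independent acceptance.
    expected_tokens = sum(acceptance_rate ** i for i in range(depth + 1))
    # One normal step plus the per-token speculation overhead.
    step_cost = 1.0 + draft_cost * depth
    return expected_tokens / step_cost


def best_depth(acceptance_rate, draft_cost, max_depth=8):
    """Depth in 1..max_depth with the highest modeled speedup."""
    return max(
        range(1, max_depth + 1),
        key=lambda depth: modeled_speedup(acceptance_rate, depth, draft_cost),
    )
```

The useful takeaway is the direction: lower acceptance or pricier drafting pulls the best depth down, which is why the right num_speculative_tokens is something to benchmark on your own workload.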

Acceptance rate also varies by workload. Code generation, with its highly predictable syntactic patterns, gets the highest acceptance. Creative writing and dialogue typically run 10 to 15 percentage points lower because token-level predictability drops.

WHICH MODELS ACTUALLY SUPPORT MTP IN vLLM TODAY

As of mid May 2026, the production ready MTP enabled models in vLLM are:

DeepSeek V3 and V4. The original implementations. Native MTP support was first class in vLLM before any other engine. Very high acceptance rates, and well suited to multi-GPU tensor parallel deployments.

Qwen 3.6 27B (dense). My personal favorite pick for a single 3090, and the current sweet spot for 24GB to 48GB consumer GPUs. Officially supported in vLLM 0.9 and later with the native MTP path enabled.

Qwen 3.6 35B A3B (mixture-of-experts). Total parameters are 35 billion, but only 3 billion are active per token. vLLM’s expert parallelism handles MoE routing efficiently, and the MTP heads compose cleanly on top.

Gemma 4 26B. Google’s MTP-trained checkpoint. Fully supported in vLLM with AWQ and FP8 quantization paths.

NVIDIA Star Elastic. Newer, less-tested, but interesting because a single checkpoint can be sliced into 30B, 23B, or 12B at inference time. vLLM has experimental support behind a feature flag.

If you see a community fine tune labeled “MTP Preserved” or “Native MTP,” it means the fine tuner did the extra work to keep the prediction heads intact in the safetensors. Many popular fine-tuning recipes silently strip them.
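One quick sanity check before downloading a fine tune is to look at the checkpoint's model.safetensors.index.json, which maps tensor names to shard files. The sketch below searches those names for a marker substring. The default marker "mtp" is an assumption: tensor naming differs between model families, so compare against the official checkpoint's index to learn what the heads are actually called.

```python
import json


def find_mtp_tensors(index_json_text, marker="mtp"):
    """List tensor names in a safetensors index that contain `marker`.

    index_json_text: contents of model.safetensors.index.json.
    marker: substring to look for (hypothetical default, see note above).
    """
    weight_map = json.loads(index_json_text).get("weight_map", {})
    return sorted(name for name in weight_map if marker in name.lower())
```

An empty result on a fine tune, where the official checkpoint returns matches, is a strong hint that the heads were stripped.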

A QUICK NOTE ON MIXTURE-OF-EXPERTS AND THE A3B NOTATION

The “A3B” suffix on Qwen 3.6 35B A3B trips a lot of people up.

It means “active 3 billion parameters.” The model has 35 billion total parameters, but for any given token, only about 3 billion are actively used. A router network picks which experts to engage per token.

The practical consequence is that VRAM usage is dictated by the total parameter count, while inference speed is dictated by the active parameter count. That is how Qwen 3.6 35B A3B can run at close to the speed of a dense 3B model while having the intelligence of something much larger.
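The VRAM half of that statement is simple arithmetic, sketched below. It counts weights only and ignores quantization metadata, activations, and the KV cache, so read the result as a floor rather than a full budget. The function name is mine.

```python
def weight_memory_gb(total_params_billion: float, bits_per_param: float) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes).

    Uses the TOTAL parameter count: every expert in an MoE model has to be
    resident in memory even though only a few are used for each token.
    """
    bytes_per_param = bits_per_param / 8
    return total_params_billion * bytes_per_param
```

For a 35B-total model this gives 35 GB at 8 bits and 17.5 GB at 4 bits, regardless of how few parameters are active per token.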

MTP and mixture-of-experts compound nicely in vLLM. The MoE router cuts the per-token cost, MTP cuts the per-step count, expert parallelism distributes the routing across GPUs, and you end up with throughput that would have seemed impossible on consumer hardware a year ago.

THE HARDWARE TIER TABLE

vLLM is GPU-first and designed for serving rather than single stream chat. The tier numbers below assume you are running the official OpenAI compatible server with paged attention and that you want sustained throughput, not just a single quick reply.

24GB VRAM TIER (RTX 3090, 4090, AND THE 32GB 5090)

Model: Qwen 3.6 27B AWQ 4-bit.

Backend: vLLM 0.9+ with native MTP and num_speculative_tokens=3.

Result: 110 to 135 tokens per second at single-user throughput, 2x to 3x baseline, 64K context window with FP8 KV cache enabled.

Why it matters: this is the first time a single consumer GPU has hit triple-digit tokens-per-second on a dense 27B-parameter model at usable quality, while also serving concurrent requests with continuous batching.

48GB VRAM TIER (DUAL 3090, A6000, RTX 6000 ADA)

Model: Qwen 3.6 27B at FP8 or GPTQ INT4.

Backend: vLLM with tensor parallelism (tp=2 on dual cards) and native MTP.

Result: 2.5x faster inference, 128K to 200K context window, sustained throughput across 8 to 16 concurrent users.

The practical caveat at this tier: when you go above 128K context, switch from FP8 KV cache to unquantized to avoid quality drift in long agent loops.

80GB VRAM TIER (A100 80GB, H100, H200)

Model: DeepSeek V4 or Qwen 3.6 35B A3B at FP8.

Backend: vLLM with tp=1 or tp=2, native MTP, expert parallelism for MoE models.

Result: 2.5x to 3x speedup on DeepSeek, with batched throughput in the thousands of tokens per second range.

This is vLLM’s natural home. The engine was built for exactly this hardware tier, and MTP turns it into the cheapest way to host frontier class open-weight models on your own infrastructure.

AMD ROCm TIER (7900 XTX, MI300X)

Model: Qwen 3.6 27B AWQ or FP8.

Backend: vLLM-ROCm via Lemonade, with native MTP recently added as an experimental path.

Result: Comparable token throughput to the NVIDIA equivalents, with the caveat that MTP support on ROCm is one release cycle behind CUDA.

Why this matters: vLLM-ROCm in Lemonade gives AMD users a real serving path for the first time. If you bought a 7900 XTX or an MI300X and have been stuck on llama.cpp, this is your upgrade.

A note on what is missing from this table.

vLLM does not run on Apple Silicon (dorks, buy a ThinkPad and run Linux). If you are on a Mac Studio or MacBook Pro, MTP is available through llama.cpp on Metal or through MLX nightly builds, but vLLM is not your engine. The vLLM-specific parts of this guide do not apply to your hardware, but most of the concepts carry over to llama.cpp or Ollama.

vLLM also pre-allocates GPU memory aggressively. It is not a great fit for 12GB cards, where small KV cache budgets defeat the purpose of continuous batching.

If you are on a 3060 or 4070, llama.cpp with the MTP pull request is the better answer.

If you have a 4080 or better, vLLM is super meta. (I’m running on a 5090 and it’s butter.)

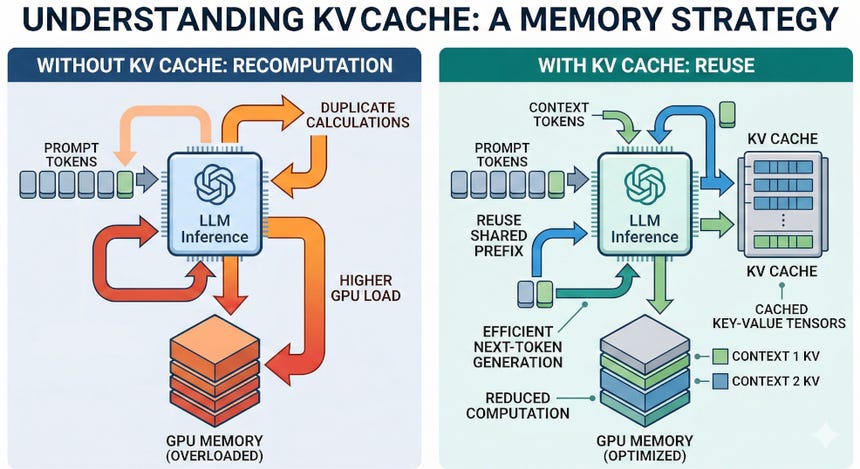

A QUICK PRIMER ON KV CACHE AND PAGED ATTENTION

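When you generate text with a transformer, the model keeps a running cache of all previously computed attention keys and values. That cache grows linearly with context length and dominates memory at long contexts. (Read that again: this is how every LLM works, ChatGPT included.) Paged attention is how vLLM manages that cache: it stores it in small fixed-size blocks allocated on demand instead of reserving one contiguous buffer per request, which is what makes continuous batching practical.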
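The linear growth is easy to see with the standard sizing formula: two tensors (keys and values) per layer, per KV head, per token. The model shape used in the note below (64 layers, 8 KV heads, head dimension 128) is a hypothetical example, not the real configuration of any model in this guide. Read the actual values from the checkpoint's config.json.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_element):
    """KV cache size in bytes for ONE sequence of seq_len tokens.

    The leading 2 accounts for storing both keys and values.
    bytes_per_element is 2 for FP16/BF16 and 1 for an FP8 KV cache.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_element


def kv_cache_gib(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_element):
    """Same quantity expressed in GiB (2**30 bytes)."""
    total = kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_element)
    return total / 2**30
```

With that hypothetical shape, a single 65,536-token sequence needs 8 GiB of cache at FP8 and 16 GiB at FP16, which is why the KV cache dtype matters so much on a 24GB card.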

QUANTIZATION QUALITY: WHICH FORMAT SHOULD YOU ACTUALLY RUN?

vLLM uses a different quantization ecosystem than llama.cpp. There is no GGUF here. The formats that matter in vLLM 0.9+ are FP8, AWQ, GPTQ, and INT4.

FP8 is the new production default. Quality is indistinguishable from BF16 in blind testing, and the speed and memory advantages are real on Hopper, Ada Lovelace, and MI300.

AWQ 4-bit is the practical sweet spot for 24GB consumer cards. Detectable but minor quality regression compared to FP16, with significant memory savings and excellent vLLM kernel support.

GPTQ INT4 is similar to AWQ in size and quality but has slightly slower kernel performance in current vLLM builds.

Other INT4 group-quantized formats, and anything more aggressive than AWQ 4-bit, should be treated as experimental. The intelligence loss compounds badly in agent loops, where each step’s small error feeds into the next step’s prompt.

The general rule: if you are on an H100 or newer, run FP8 and stop thinking about it. If you are on a 3090 or 4090, run AWQ 4-bit unless you specifically need the last 1 to 2 points of benchmark quality.

HOW TO ACTUALLY RUN THIS TODAY

There are three things you need.

First, a model with MTP layers preserved. You want safetensors, not GGUF. Look for releases on Hugging Face tagged with “MTP” or “Native MTP Preserved.” Qwen has published official MTP-enabled checkpoints for the 3.6 series, and Unsloth has community maintained mirrors with additional fine tunes. I prefer Hugging Face.

Second, vLLM 0.9 or newer. Install with pip in a fresh Python 3.11 or 3.12 virtual environment. On AMD, install the ROCm wheel via Lemonade, which handles the dependency dance for you.

Third, the right server flags. A working command for a 24GB card looks like this:

    vllm serve Qwen/Qwen3.6-27B-MTP \
      --quantization awq \
      --speculative-config '{"method":"mtp","num_speculative_tokens":3}' \
      --max-model-len 65536 \
      --kv-cache-dtype fp8 \
      --gpu-memory-utilization 0.92 \
      --enable-prefix-caching

That gives you an OpenAI compatible endpoint on port 8000, native MTP with depth 3, FP8 KV cache, 64K context (even 32K should be fine for just 1 person), and prefix caching for agent loops (holy grail).
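Once the server is up, any OpenAI-style client can talk to it. Here is a minimal sketch using only the Python standard library. It assumes the server is on localhost port 8000 and that the served model name matches the one passed to vllm serve. The helper names are mine, and max_tokens and temperature are arbitrary example values.

```python
import json
import urllib.request


def build_chat_request(prompt, model="Qwen/Qwen3.6-27B-MTP",
                       base_url="http://localhost:8000/v1"):
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
        "temperature": 0.0,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=120):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling send_chat_request(build_chat_request("Write a haiku about GPUs")) should return the reply text. Nothing on the client side changes when MTP is on, since speculation happens entirely inside the server.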

For multi-GPU setups, add --tensor-parallel-size 2 (or 4, or 8) to scale across cards (I have yet to test this, but I will one day). For AMD, swap the awq quantization for fp8 or the ROCm native quant of your choice.

DOES MTP WORK WITH OTHER ENGINES?

This is the second most common search question, so the short answer for each:

llama.cpp has native MTP via a recent pull request, not yet merged to main as of this writing. It is the right answer for single user inference, Mac users, and anyone on a 12GB or smaller GPU.

ExLlamaV3 does not yet ship native MTP. Its speculative decoding implementation works with a separate draft model.

MLX (Apple Silicon native) has MTP support in nightly builds, with performance still trailing llama.cpp on Metal. For Mac users today, llama.cpp is the right choice (I think Mac should go out of business personally, just expert fumblers).

Ollama and LM Studio are wrappers around llama.cpp and will gain MTP support once it merges upstream. Neither runs on vLLM.

For production serving, multi-user workloads (aka GigaChads), multi GPU deployments, or anything that needs throughput rather than just single stream speed, vLLM is the clear answer. That also makes it the answer for enterprises, so mastering it now translates directly into job experience at places like IBM or NVIDIA.

WHAT THIS CHANGES

The honest answer is that hosting your own frontier class open weight model has been a promise more than a reality for the last year. The models were smart enough. The serving stack was too slow per dollar.

At 135 tokens per second on a single 3090, with batched throughput in the thousands on an 80GB card, the math changed. Workloads that previously required cloud inference at frontier model prices now run on hardware that pays for itself in three to six months against a typical Claude or GPT subscription bill.

This is the moment to take self hosted inference seriously again if you had written it off after the last benchmark cycle.

The MTP work is moving fast, vLLM is shipping native support for new models almost weekly, and the cost curve has finally crossed the line where on device beats the API for the workloads most teams actually run.

If you are building anything that depends on cheap, private, high throughput inference, vLLM with MTP is the stack worth learning today...

What do you think?

God-Willing, see you at the next letter

GRACE & PEACE