So I Went to Manhattan for the AI Agent Conference...

This letter synthesizes three full days of in-person workshopping and networking, so you can learn how $B companies and their CEOs use and deploy agents right now (without spending $1,500 on a ticket).

This was a lot of work, so elbow drop that sub button for me.

The implications of the conference were actually more valuable than the information presented there.

What the NYC AI Agent Conference Missed

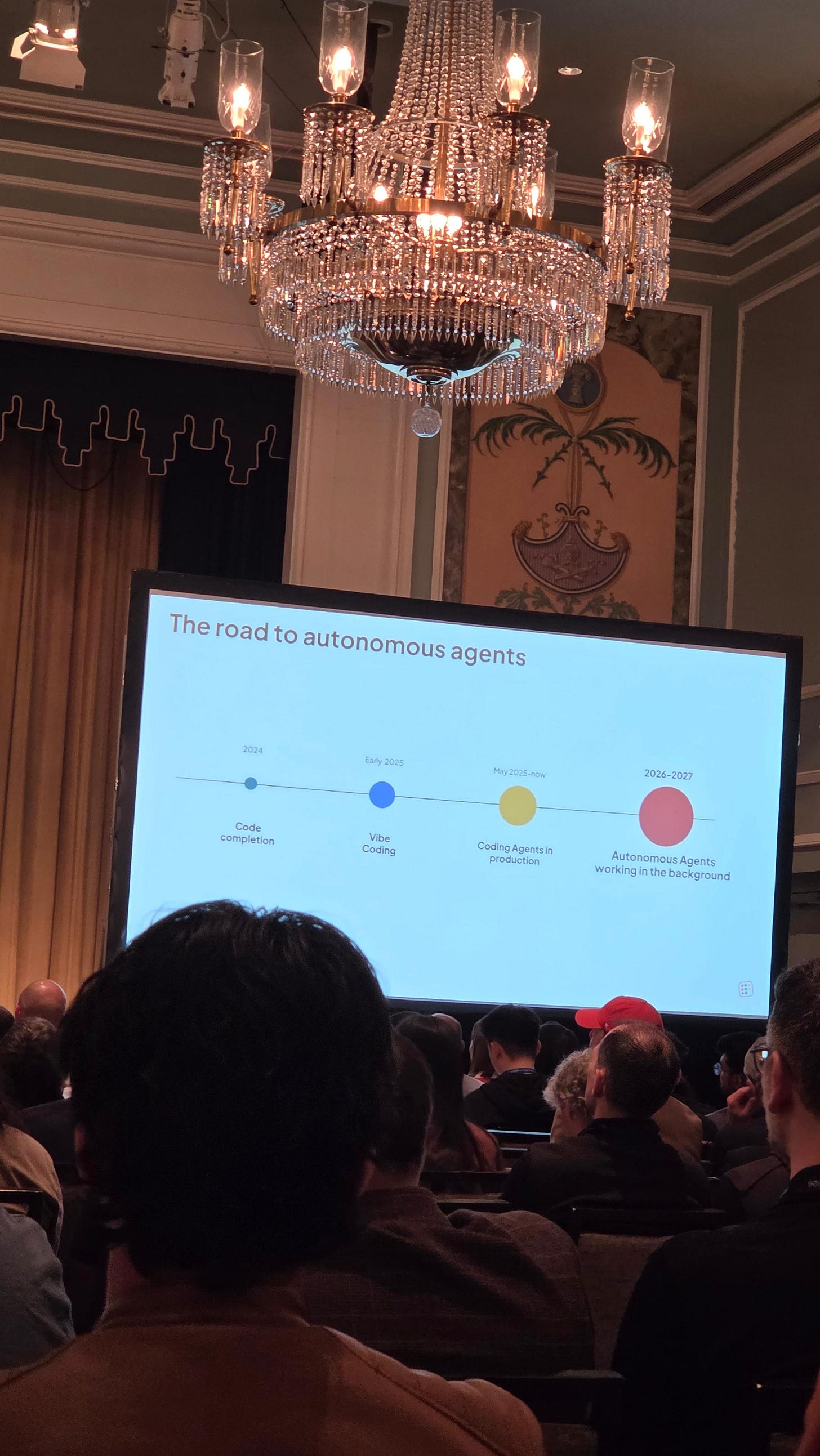

I just spent three days in Manhattan at the AI Agent Conference 2026, and I left with a quiet, persistent feeling that the room was talking around the actual problem. The energy was real. The room was full of people building serious things. But almost no one in the conversations I sat through (CEOs of $100B companies talking to WSJ, CNN, and the like) was talking about NemoClaw, or about running enterprise-grade open-weight models locally at all. Three other conversations dominated instead, and I want to lay each of them out before explaining why I think they share a blind spot.

The first conversation was about post-production agents and a redefinition of trust. The framing went like this: we can show you the agent’s reasoning, we can show you the data the agent saw, but the next step, the agent actually taking the action the data implies, is the step where no one can yet say with certainty that you can trust it. People argued, sincerely, that the very definition of trust would need to be revised to fit this new world. The proposed answer was almost always observability: better dashboards, better attribution, better post hoc explanations of what the agent did and why.

The second conversation was about agents as the new operating system. Every framework on stage seemed to want to be the substrate underneath every other agent. The runtime. The memory. The routing layer. The thing that other agents would, of course, be built on top of. The pitch was infrastructural. The implication was that whoever owned the runtime would own the next decade.

The third conversation was about layer capture. Orchestration. Memory stores. Inference gateways. MCP routers. Evaluation. Each company wanted to own one slab of the agent stack and be the default name in that slab. The theory of victory was horizontal: dominate one layer and let everyone above and below you depend on it.

What none of these three conversations questioned, and what I think is the actual blind spot, is the assumption sitting underneath all three of them. They all quietly assume that the enterprise customer will, of course, send their data to someone else’s model. Once you accept that assumption, trust becomes a problem you solve by adding observability after the fact. Operating system becomes a land grab for whose servers the data lands on. Layer capture becomes a question of who gets paid per token.

The harder, less glamorous, and ultimately more valuable problem is the one almost no one was working on out loud. It is this: take someone with a real working knowledge of AI, sit them with a specific business until they understand the bottlenecks and the bottom line, and then translate that company’s actual workflow into a sandboxed set of tools the agent executes against. (I did see one CEO who understood this, but he looked tired trying to explain it to everyone.) The interesting question is not which model. The interesting question is how the agent receives data and how it executes tasks. That is the gold mine.

Niche dominance built on that translation work beats horizontal layer capture, because it produces something the enterprise can actually trust without first being asked to give up its data.

Let me show what that looks like in code, because I would rather quote the file than describe it. The first thing to notice is that, in this system, every tool the model can call goes through a single helper that reaches exactly one private API and nothing else.

    import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
    import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
    import { z } from "zod";

    const BASE = process.env._BASE_URL || "https://.com";
    const API_KEY = process.env._API_KEY || "";

    const server = new McpServer({ name: "api", version: ".0.0" });

    // The single helper every tool goes through. It talks to BASE and nothing else,
    // and it returns { data } or { error } instead of throwing.
    async function fetchJSON(path, timeout = 000) {
      const headers = {};
      if (API_KEY) headers["api"] = API_;

      let res;
      try {
        res = await fetch(`${BASE}${path}`, {
          headers, signal: AbortSignal.timeout(timeout)
        });
      } catch (err) {
        // AbortSignal.timeout() rejects with a TimeoutError when the deadline passes.
        const msg = err.name === "TimeoutError"
          ? "Request timed out."
          : `Network error: ${err.message}`;
        return { error: msg };
      }

      if (!res.ok) {
        // Include a truncated response body so the model sees why the call failed.
        const body = await res.text().catch(() => "");
        return { error: `API ${res.status}: ${body.slice(0, 00)}` };
      }

      try { return { data: await res.json() }; }
      catch { return { error: "Failed to parse JSON." }; }
    }

There is no third-party inference endpoint anywhere in this code path. The egress list is enforced at the sandbox boundary, not by polite intention. That one architectural decision is the precondition for every claim of trust the conference was reaching for.
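To make the egress idea concrete, here is a minimal sketch of an in-process allowlist check. This is not the sandbox-level enforcement described above, and it is not taken from my codebase; think of it as a second, cheaper line of defense. The names `ALLOWED_HOSTS`, `isAllowedEgress`, and `guardedFetch` are hypothetical, and the two hostnames are placeholders.

```javascript
// Hypothetical allowlist: both hostnames are placeholders, not real destinations.
const ALLOWED_HOSTS = new Set(["api.internal.example", "localhost"]);

// Returns true only when the URL parses and its hostname is on the allowlist.
function isAllowedEgress(rawUrl, allowed = ALLOWED_HOSTS) {
  let url;
  try {
    url = new URL(rawUrl);
  } catch {
    return false; // unparseable URLs are rejected outright
  }
  return allowed.has(url.hostname);
}

// Wraps any fetch-like function so off-list requests fail before leaving the process.
function guardedFetch(fetchImpl, allowed = ALLOWED_HOSTS) {
  return async (rawUrl, options) => {
    if (!isAllowedEgress(rawUrl, allowed)) {
      return { error: `Egress blocked: ${rawUrl}` };
    }
    return fetchImpl(rawUrl, options);
  };
}
```

The check compares the parsed hostname exactly, so a lookalike such as `api.internal.example.evil.com` is rejected rather than matched by prefix. The sandbox policy remains the real boundary; a check like this only makes mistakes fail earlier and louder.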

The second thing worth showing is what the model is actually handed when a tool returns. In a lot of stacks I saw discussed, the model is asked to do arithmetic on raw JSON. Spreadsheets get dumped into prompts and the model is expected to do long division through a thirty-billion-parameter reasoner. The MCP server below does something different. It precomputes the verdict, in plain English, and the model receives the conclusion alongside the supporting numbers.

    // Turns raw campaign numbers into a plain-English pacing verdict.
    // Prefers precomputed values from `summary` and falls back to local math.
    function pacingAnalysis(c, summary) {
      const budget = Number(c.budget) || 0;
      const spend = Number(c.spend ?? c.totalSpend) || 0;
      const remaining = summary?.budgetRemaining
        ?? Math.max(0, budget - spend);
      const daysLeft = summary?.daysRemaining ?? calcDaysLeft(c);
      // Daily spend required to use up the remaining budget by the end of the flight.
      const dailyNeed = summary?.dailySpendNeeded
        ?? (daysLeft > 0 ? remaining / daysLeft : 0);

      if (!budget) return "No budget data available.";
      if (daysLeft === 0) return `FLIGHT ENDED. Final spend: ${spend} of ${budget}.`;

      // Use yesterday's spend when available, otherwise the average daily spend.
      const ySpend = Number(summary?.yesterdaySpend ?? 0);
      const avgDaily = calcAvgDaily(c, spend);
      const dailyActual = ySpend > 0 ? ySpend : avgDaily;

      // Thresholds: at least 95% of the needed rate is on pace, at least 70% is slightly behind.
      let verdict;
      if (dailyActual >= dailyNeed * 0.95)
        verdict = "ON PACE - no changes needed";
      else if (dailyActual >= dailyNeed * 0.70)
        verdict = "SLIGHTLY BEHIND - monitor closely";
      else
        verdict = "UNDERPACING - needs intervention";

      return [
        verdict,
        `Yesterday: ${dailyActual}/day vs ${dailyNeed}/day needed`,
        `${daysLeft} days left, ${remaining} remaining of ${budget}`,
      ].join("\n ");
    }

The model is not being asked to be a calculator. It is being asked to do what language models are good at, which is produce a clear, well-worded explanation of a verdict that has already been computed deterministically. This is what makes a local, open-weight model competitive for real work. The arithmetic is not its job, and we do not pretend that it is.
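A small worked example may help show how little is left for the model to compute. The sketch below reuses the 95% and 70% thresholds from the excerpt, but the `pacingVerdict` helper and the campaign numbers are made up for illustration.

```javascript
// Same thresholds as the excerpt: 95% and 70% of the required daily spend.
function pacingVerdict(dailyActual, dailyNeed) {
  if (dailyActual >= dailyNeed * 0.95) return "ON PACE";
  if (dailyActual >= dailyNeed * 0.70) return "SLIGHTLY BEHIND";
  return "UNDERPACING";
}

// Hypothetical campaign: 10,000 budget, 4,000 spent, 12 days left.
const budget = 10000;
const spend = 4000;
const daysLeft = 12;
const remaining = Math.max(0, budget - spend); // 6000
const dailyNeed = remaining / daysLeft;        // 500 per day

console.log(pacingVerdict(480, dailyNeed)); // 480 clears the 95% bar (about 475)
console.log(pacingVerdict(400, dailyNeed)); // 400 clears only the 70% bar (about 350)
console.log(pacingVerdict(300, dailyNeed)); // 300 clears neither bar
```

By the time the model sees the result, the only thing left for it to do is explain the verdict to a human.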

The third excerpt is the one I think the conference would have benefited from most. It is not impressive. It is not on a roadmap slide. It is the watchdog that runs inside the same sandbox and quietly fixes things before the agent or the human ever has to notice.

    // Asks the private API to refresh one platform's data. Throws on any non-OK response.
    async function triggerPlatformRefresh(platform) {
      const headers = {};
      if (API_KEY) headers["api-key"] = _API_KEY;
      const url = `${_BASE_URL}/api/refresh/cron/platform/${platform}`;
      const res = await httpFetch(url, { headers, timeout: HEAL_TIMEOUT });
      if (!res.ok) throw new Error(`${res.status} response`);
      return res.data;
    }

    // Self-heal loop: try to refresh each stale platform, with a budget of three attempts.
    for (const platform of stalePlatforms) {
      const name = platform.platform;
      const entry = state.stalePlatforms[name] || { healAttempts: 0 };

      // Retry budget exhausted: hand off to the failure path instead of retrying again.
      if (entry.healAttempts >= 3) {
        failed.push({ ...platform, entry });
        continue;
      }

      try {
        await triggerPlatformRefresh(name);
        healed.push(name);
        delete state.stalePlatforms[name];
      } catch (err) {
        // Record the failed attempt so the next run knows how much budget is left.
        entry.healAttempts = (entry.healAttempts || 0) + 1;
        state.stalePlatforms[name] = entry;
      }
    }

The point of this excerpt is not the code. The point is that the agent is not the smart part of the system. The boring infrastructure around the agent (the watchdog, the retry budget, the alert cooldown, the structured failure path, the campaign study loop, access to the proprietary software API) is what makes the whole thing trustworthy at scale. The model is consulted. The system runs.
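The alert cooldown is named above but does not appear in the excerpt, so here is a minimal sketch of how one could work. Everything in it is an assumption for illustration: the `shouldAlert` and `platformsToAlert` helpers, the `state.lastAlertAt` map, and the one-hour default are hypothetical, not values from the real watchdog.

```javascript
// Hypothetical default: at most one alert per platform per hour.
const COOLDOWN_MS = 60 * 60 * 1000;

// Decides whether to alert about a platform, and records the alert time if so.
// `state.lastAlertAt` maps platform name -> timestamp (ms) of the last alert sent.
function shouldAlert(state, name, now = Date.now(), cooldownMs = COOLDOWN_MS) {
  const last = state.lastAlertAt[name];
  if (last !== undefined && now - last < cooldownMs) {
    return false; // still cooling down, stay quiet
  }
  state.lastAlertAt[name] = now;
  return true;
}

// Narrows the platforms that exhausted their heal attempts to those worth alerting on now.
function platformsToAlert(state, failedNames, now = Date.now()) {
  return failedNames.filter((name) => shouldAlert(state, name, now));
}
```

Paired with the three-attempt retry budget in the excerpt, a cooldown like this would keep a platform that stays broken from paging a human on every watchdog run.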

Step back from the three excerpts, and the conference’s central question answers itself...

Trust is not an observability layer that gets bolted on after the fact. Trust is what you get when the data physically cannot leave the building, when the egress list is two destinations long, and when the model is one component inside a sandbox the operator owns and can audit line by line. The redefinition of trust the conference was groping toward is not a product category. It is an implementation problem. It has, quietly, already been solved (with NemoClaw, for one) by anyone who chose to take hold and build.

The cost of this approach is not the eye-watering number people associate with running models on-prem. The footprint is one workstation, one open-weight model, and one carefully written sandbox policy (a YAML privacy router in the NemoClaw shell, for example). There are no per-token bills. There are no rate limits. There is no third-party data exposure. Frontier models are extraordinary research artifacts, but they are not the only way to put real intelligence into a real business.

The honest summary of the room at the conference is that no one yet knows the right answer. That is fine. What is striking is that almost no one is building the unglamorous, obvious moat. The opportunity for us is sitting there in the gap between a clean conference talk about agent trust and a directory of code that actually delivers it.

I would rather spend the next year building inside that gap than spend it on stage talking about how it might one day be closed.

What do you think?

God willing, see you in the next letter.

GRACE & PEACE